When was the last time you used a landline?

We’ve moved gradually to cellphone and non-phone communication networks over the past few decades. Our old copper-line infrastructure sits largely unused, and now companies like Verizon are moving to scoop up this valuable copper while forcing users onto a fiber network. My parents upstate finally made the switch a few years ago, as their old landline (one of the few numbers I remember) only received calls from telemarketers. Since then, however, there have been several storms which wiped out their power. After such a storm, their phone’s charge percentage serves as a countdown to total isolation.

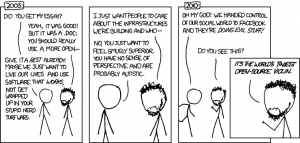

It’s not just that “new isn’t always better”, but that the new can make the old worse. The Network Effect is a catch-22 which makes a service valuable only once a significant number of people use it. The inverse, the “No Network Effect”, means that even if you don’t like cell phones or emails or whatnot, you WILL upgrade or be removed from society.

xkcd 743 from 2010(!)

This concept is an important addition to the readings, particularly when we think of questions of consent. We may know about algorithmic bias, digital redlining, and disparities in how infrastructure serves the privileged vs. the vulnerable. The current platforms are unethical, but what good does knowing do us as individuals? Even if we don’t wish to consent to the current network, it is arranged so that the alternative is being rejected by society.

The questions I tend to focus on in terms of digital pedagogy are (1) how do we build/adopt inherently ethical networks (e.g. FLOSS)? And (2) how do we divest from or break unethical networks? Any educator plays an important role in choosing tools and platforms for their students, and these choices are inherently political. Teaching Photoshop over GIMP, using SPSS over R, or accepting ‘.docx’ over ‘.odt’ are all choices which reduce or promote our students’ digital rights. How do we also balance this influence with the Network Effects of industry standards?

A new question that came up for me this week was, to what extent are we also responsible for addressing these issues as they pertain to our students’ out-of-class lives? It’s one thing to not use Facebook for class discussions, but our students are still on the platforms which don’t respect consent. (Platforms such as Facebook go to great lengths just to avoid basic protections.)

While this discussion is not at all relevant to most of our fields, should we dedicate some class time to it regardless? Similar to questions of literacy, the curriculum fails to address these concerns anywhere along K-PhD.

I suspect I will in the future, if for no other reason than to justify why I am asking for “.odt” files. I’m interested in your feedback on what feels useful and what you imagine students would stick to, so I’ve prepared an example of a mini-cryptoparty-worksheet I might present to future students (in addition to discussing the issues above).

Have fun 🙂

Cryptoparty Worksheet

Even if you don’t care about your data, you should care about your shadow! Watch:

Data detox basics:

- Go through settings! The defaults are always worse than they need to be.

- Install these browser extensions: HTTPS Everywhere, Privacy Badger, Terms of Service; Didn’t Read, and uBlock Origin (Firefox/Chrome) [not the other adblockers out there!]

- Avoid using your phone! Computers give you much more control.

- Keep detoxing here: https://datadetox.myshadow.org/detox

Before using any tool or service, ask yourself:

- Is it free and open source, or is it proprietary?

- What do you know about the company which owns the service?

- What are the terms of service?

“Lost in the small print” helps make things more readable.

- Has the tool been security audited?

- Who carried out the security audit?

- Don’t like the answers? Find alternatives!

https://prism-break.org/en/

https://alternativeto.net/

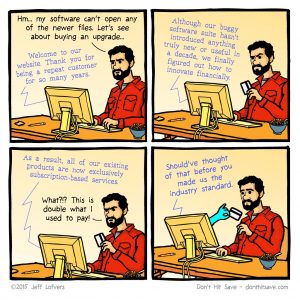

Having been involved in some FLOSS communities (myself a little, a project I was involved in a little more, and adjacent to by marriage), I feel fairly knowledgeable about the open source ethos. But as someone who was mocked at a Drupal conference for running Windows (work laptop, not everyone has the will/way/time to install Linux), and further, someone who is increasingly sympathetic to the need for ease as I get older, I want to explore a little the comfort in using the tools you’ve always used, or the tools that are familiar and maybe easier to acclimate to.

I don’t want to ignore the existence and role of white women, trans folks, and older people and POC in FLOSS communities, but I feel like the orthodoxy can be off-putting. I’ve struggled with OpenOffice.org and Crabgrass and found that working with and organizing through those tools can be so frustrating that I can’t do what I have to do.

OTOH maybe we shouldn’t be trying to do more than we can do with ethical software? I think that sometimes about not having enough time in the day, or wishing I had an assistant for my three-in-one job. I think no one should have so much work that they need an assistant. Oops. I’m getting off track. What I’m thinking about is that right now, as things stand, I shamefully am willing to trade a fair amount of privacy for convenience. We all have our limits, I guess.

My cousin is a Silicon Valley engineer, and after visiting her home, with its four Alexa devices, and seeing how she and her partner and sibling follow each other’s phones on GPS and order Uber delivery (whatever that’s called), and scan wrappers and boxes when they’re finished to reorder automatically from Amazon, I came up with the phrase “conspicuous convenience” to describe their lifestyle. I’m not knocking them, but if they lived on Caprica, because of all the data they share, they’d be easy to Cylonify.

I don’t want to seem to be, or actually be, arguing for proprietary systems that profit from our data. I’m just thinking about what/when/how much I want to share, for my own convenience, and with consent (not the clicking on a terms of use kind of consent). I hate shopping, so if the internet knows I want medium petite black clothes with pockets, what’s the risk, right?

I feel like that’s what data privacy trainings are missing–either, what is the risk to the average person, or making clear what the greater risk is to society. I can’t remember if I had this revelation in this class, or while talking to friends (maybe even Jessamyn West, come to think of it): we need to think not of the harm to individuals, or even “the children,” but to society in general. Am I going to do my part in saving society by using open formats, the same way I do by drinking tap water and using cloth bags? With the environment example, is doing our part too late at this point???

Sorry to be so depressing. This week’s readings, though.

I really appreciate your comment, Jenna, and agree it is important to reflect on your points. In particular, “we need to think not of the harm to individuals, or even ‘the children,’ but to society in general”. I prefer this framing as it is analogous to “herd immunity” in virology. Protection isn’t as much about an individualist protection as it is about a social one which protects the most vulnerable among us.

I think a tension I have trouble squaring is how to create new normals. The onus is not on us but on the companies and institutions involved (including state regulation), but it is hard to imagine any shift on that level happening soon. I really pledge to the Holistic Security handbook here, and say it really is about trusting that individuals are experts in their own context and can weigh the costs/benefits of xyz tradeoffs between security, convenience, and an abstract “social good”.

With all that said, I do feel myself straying from the Holistic Security handbook slightly when it comes to the classroom. “Out there”, folks have to obsess over damage control and are stuck in an unethical tech ecosystem. In the class, however, I wonder what opportunities there are to establish these more ethical tools just to let people play around with them. For similar reasons, I care about having a very horizontal classroom where students participate in a mini-democracy. Not as a solution in itself, but maybe as an escape? As a form of harm reduction? I’d never force students to install Ubuntu or buy a VPN or only use ONE setting configuration *I* chose…but I’d be very tempted to give them all an Ubuntu laptop to use in class, given infinite resources. This sort of experimental classroom exposure is what introduced me to tools like R (and now WordPress). Ultimately using ‘.odt’ instead of ‘.doc’ won’t exactly be a life-changing moment of liberation, but maybe an exposure to LibreOffice will make folks question why Word is the standard?

Then again, I do take your point that this may itself create some inequality within the classroom, since even if all the students had the “digital literacy” needed, it would expose them to the cissexism/racism/etc. of the tech community*. It also does create a secondary workload for students, where they need to learn about the course content AND how to change settings and use new things. Reflecting on your points does highlight to me the importance of students having a say in these choices, and having these changes be compelling but not compelled.

* As an aside: even citing “GIMP” as an example felt weird above, as the folks maintaining the program refuse to rename it. It would be a nightmare to have an outright offensively named program on the syllabus -__-.

Yes to all of that! The “herd immunity/vaccination” analogy is exactly what I meant. I’m thinking back to when I went to a PGP workshop, and we talked about using it all the time, and not just for at-risk conversations. But…it feels like a fair amount of work, compared to using Signal, for example.